NEW YORK, NY — December 1, 2025 — A new Juris Education survey reveals that despite using AI for academic support, writing help, and application preparation, aspiring lawyers do not trust the technology for emotional support.

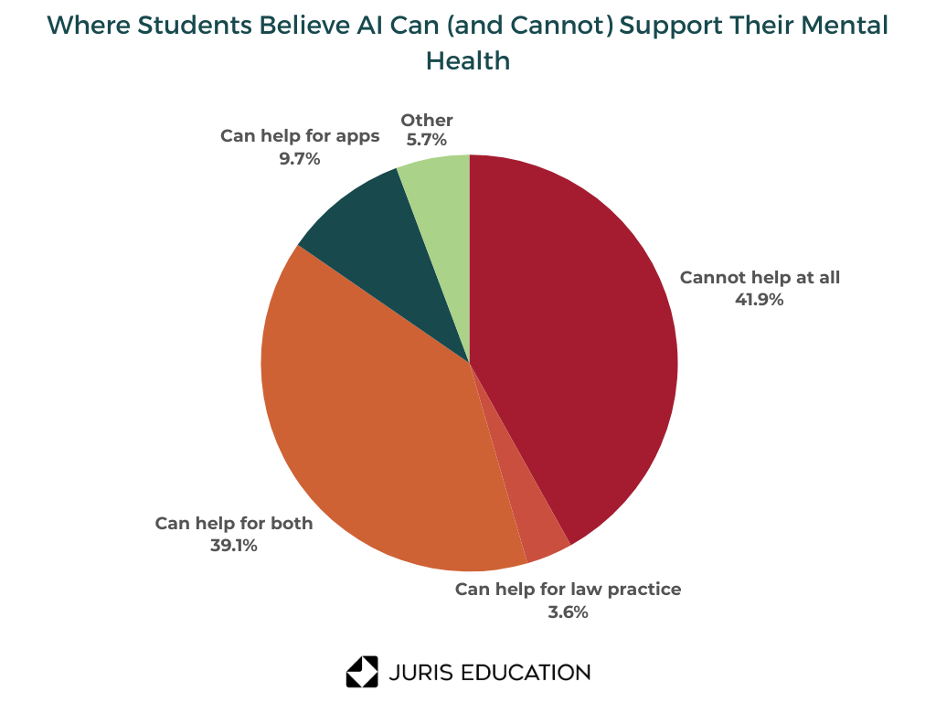

Out of the 248 pre-law students interviewed, 41.9% said they don’t believe AI tools can improve their mental health either during the law school application process or once they become practicing attorneys. By comparison, 39.1% felt AI could be helpful in both stages.

The survey comes at a time of increasing reliance on AI for emotional and mental health support. In one recent study published by JMIR Mental Health, 28% of community members said they used AI tools for quick emotional support or as a kind of personal therapist or coach. According to the Juris Education survey, 96% of aspiring lawyers feel overwhelmed at least occasionally, and almost 70% have considered seeking therapy at some point in the admissions cycle. Students clearly need support, yet they do not believe AI is the right source for it.

A significant reason aspiring lawyers likely hesitate to use AI for mental health is the belief that the technology simply gets things wrong too often. Many students said they have already seen AI make factual mistakes, overlook nuance, or offer feedback that feels too generic or surface-level for something as personal as stress and anxiety. Several respondents flagged concerns about “hallucinations,” “inaccurate or unchecked information,” and the need for constant “fact-checking and understanding the limitations of AI” to avoid mistakes.

OpenAI’s own evaluations show that large language models sometimes misinterpret emotionally sensitive messages or fail to recognize when a user’s wording indicates distress. In controlled testing, the models occasionally provided incomplete or non-escalatory responses in situations where a trained therapist would ask deeper questions or take immediate action.

The survey shows that many students already feel uneasy about putting mental health details into a system they do not fully understand. In fact, 52.8% said they feel uncomfortable or strongly opposed to sharing sensitive emotional information with AI.

This hesitation mirrors broader concerns documented across legal education. The American Bar Association’s 2025 Legal Technology Survey reports that 71% of legal professionals list privacy and confidentiality as their top barrier to AI adoption.

Even though these students are not yet attorneys, they are already operating in a space where privacy matters. They are preparing personal statements, writing about hardships, navigating character and fitness rules, and managing financial aid information. For many, putting sensitive emotional details into an AI system feels like giving up control at a time when they are already vulnerable.

Interestingly, the reluctance to use AI for mental health does not reflect a general rejection of AI. In the survey:

Jesse Wang, senior admissions consultant at Juris Education and a New York attorney, believes this distinction is intentional.

“Students know AI can help with small, time-consuming tasks. When those tasks disappear, students feel less drained. But emotional support is different. Most students want people involved when the topic is their well-being, and that makes sense,” Wang said.

AI adoption is rising in the legal field. The ABA reports that nearly one in three practicing lawyers already use some form of AI, and usage is growing fastest in larger firms. Students are watching that shift, but they are also watching the limitations.

“We are seeing thoughtful, selective engagement,” said Arush Chandna, co-founder of Juris Education. “Students trust AI for structure, organization, and efficiency. But when it comes to emotional support, they still want something human. Their skepticism is not fear. It is discernment.”

Aspiring lawyers are not rejecting AI. They are defining the boundaries of where it fits. AI may help them draft, plan, prep, and organize, but when the question shifts from productivity to well-being, the tone changes. Students want accuracy, emotional awareness, and the ability to intervene when things feel off. AI simply cannot offer that yet.
